Artificial Intelligence, Machine Learning, and Control group

AMCO is a research group within the Department of Information Engineering at the University of Padova.

Who we are

We are a research group focused on trustworthy and intelligent AI systems. Our expertise spans algorithmic fairness, reinforcement learning, smart mobility, anomaly detection, continual learning, and explainable AI. We also develop computer vision methods, including visual anomaly detection, object detection (e.g., with YOLO), weakly supervised learning, and active learning, to build robust and adaptive models that perform reliably in real-world environments.

Research

Advancing machine learning through cutting-edge work in fairness, reinforcement learning, continual learning, and explainability.

Applications

Designing AI for real-world impact, from smart mobility to anomaly detection and robust computer vision systems.

Collaborations

Working with academia, industry, and policymakers to ensure responsible, impactful, and trustworthy AI solutions.

Research Threads

AMCO is dedicated to advancing machine learning, and reinforcement learning in particular, for real-world impact. Our work spans fundamental algorithms, trustworthy AI, and applied domains such as mobility, anomaly detection, and computer vision.

1. Algorithmic Fairness

Developing methods to ensure fairness and reduce bias in AI systems, enabling equitable decision-making in high-stakes applications.

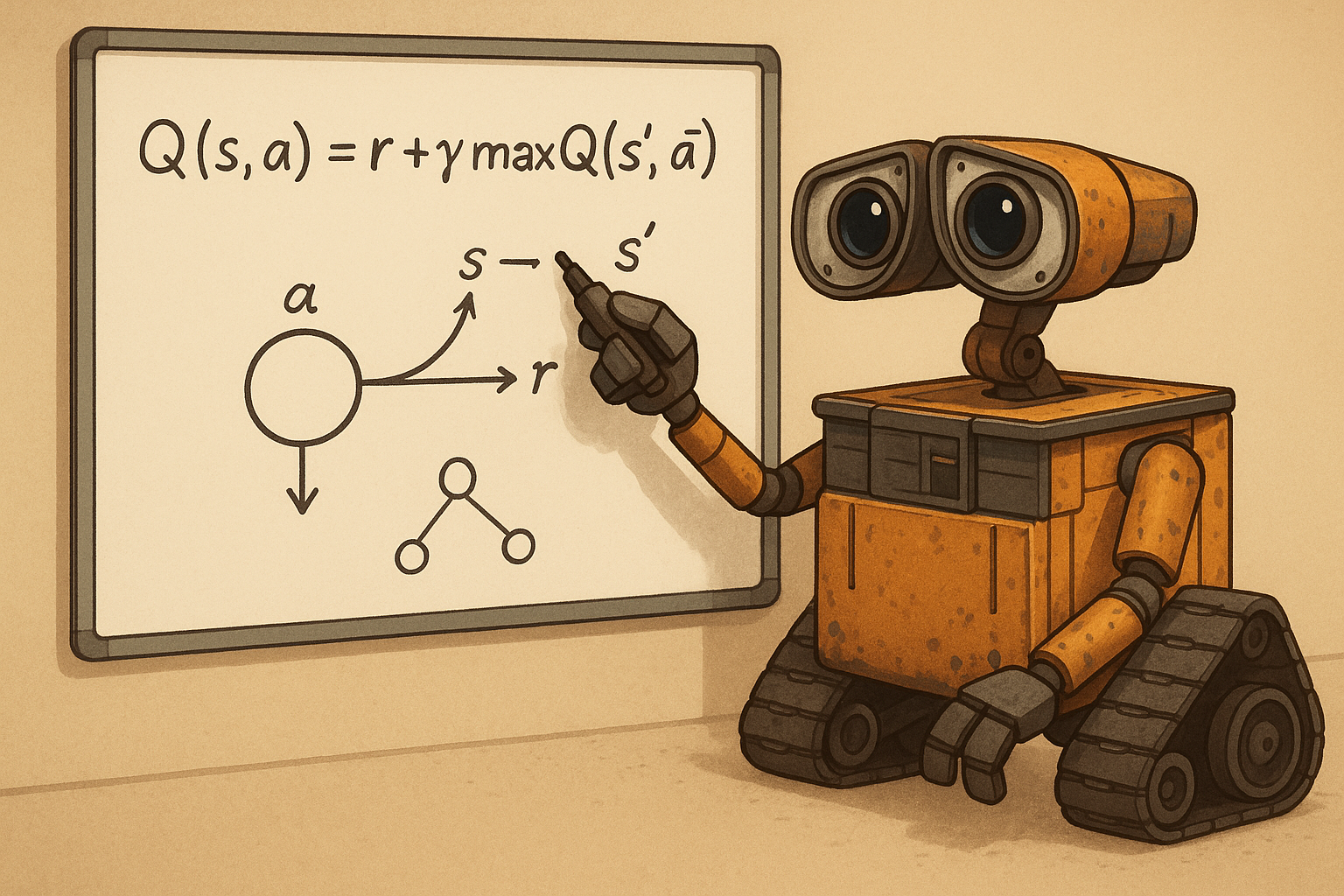

2. Reinforcement Learning

Advancing RL methods for adaptive, robust, and explainable decision-making in dynamic and uncertain environments.

3. Smart Mobility

Applying RL and machine learning to intelligent transportation systems, optimizing traffic flow, reducing congestion, and enabling sustainable mobility solutions.

4. Anomaly Detection

Creating reliable methods for detecting irregularities in data, with applications ranging from sensor failures and cybersecurity threats to industrial monitoring.

5. Continual Learning

Designing models that learn over time without forgetting previously acquired knowledge, enabling AI systems to adapt to evolving tasks and environments.

6. Explainability

Enhancing the transparency and interpretability of machine learning models, making AI decisions more understandable and trustworthy.